Sometimes we get lazy, or we mask it as “productivity” or even “DRY”. We’ve already got a passing test; we look at it and say: for my next test, I’ll need the same “Arrange” and “Act” parts, and the only thing that differs is the “Assert” part. I know! We can reuse the test and add another assert, or two, or five!

And now we have a test that looks like this:

```csharp
[TestMethod]
public void Mix_DogAndCat_CatIsDog()
{
    // Arrange
    Animal cat = new Animal() { IsCat = true };
    Animal dog = new Animal() { IsDog = true };

    // Act
    AnimalMixer mixer = new AnimalMixer();
    mixer.Add(cat);
    mixer.Add(dog);
    mixer.Mix();

    // Assert
    Assert.IsTrue(cat.IsDog);
}
```

To understand why this is a problem, we need to understand what really happens when a test fails. Depending on the framework and language, the Assert API you use (and if you don’t, go read AA Tests) throws an exception. The test framework runs each test inside a try-catch, so it can catch the exception and fail the test, and we get a nice red icon.

What happens if the first Assert throws an exception? Obviously the test fails, as it should. But what we’re missing is the result of the rest of the asserts. Because of the exception, we didn’t reach the rest of the assertions.
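To make the mechanics concrete, here is a minimal sketch of such a runner. It is not any real framework's code: `MiniAssert`, `MiniAssertFailedException`, `MiniRunner` and `Demo` are hypothetical names invented for this illustration. The point is only that the first failing check throws, so the checks after it are never evaluated.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical stand-in for the exception a framework's Assert API throws.
public class MiniAssertFailedException : Exception
{
    public MiniAssertFailedException(string message) : base(message) { }
}

// Hypothetical stand-in for an Assert API.
public static class MiniAssert
{
    public static void IsTrue(bool condition, string message)
    {
        if (!condition) throw new MiniAssertFailedException(message);
    }
}

// Hypothetical runner: executes one test inside a try-catch.
public static class MiniRunner
{
    public static string Run(Action test)
    {
        try
        {
            test();
            return "Passed";
        }
        catch (MiniAssertFailedException ex)
        {
            return "Failed: " + ex.Message;
        }
    }
}

public static class Demo
{
    // Records which checks were actually evaluated.
    public static readonly List<string> Evaluated = new List<string>();

    public static void CheckItAllTest()
    {
        Evaluated.Add("first");
        MiniAssert.IsTrue(false, "first check failed");

        // Never reached: the exception above has already left the method.
        Evaluated.Add("second");
        MiniAssert.IsTrue(true, "second check failed");
    }

    public static void Main()
    {
        Console.WriteLine(MiniRunner.Run(CheckItAllTest));
        Console.WriteLine("Evaluated: " + string.Join(", ", Evaluated));
    }
}
```

The report contains one failure message, and nothing at all about the second check, whether it would have passed or failed.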

What’s wrong with this?

Having a failing test is OK. What’s not OK is that we’re giving up our right to more information: information that can shorten the gap between found-a-bug and fix-a-bug.

Would it change our perception of the problem if we knew all the asserts were failing? What if all of them pass, apart from the first one?

We’re missing a few pieces of the puzzle that we could have had if we had written separate tests, similar in setup but with different asserts. The first failure is like a giant spotlight that obscures the rest of the information around it.

The pattern has a side effect, too: you’ll get lousy names for your tests. The usual symptom is that the test name doesn’t describe what the test really checks. So when the test fails, you only look for one reason and miss the others.

What’s the solution?

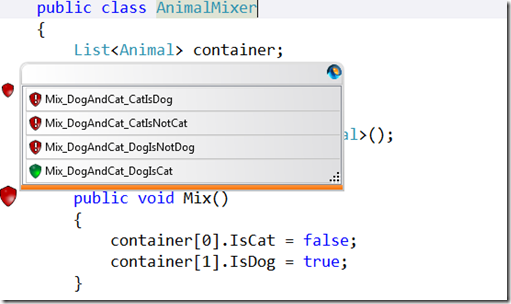

Separate the tests.

Each test has a different descriptive name and a matching assert. When you run your tests, you’ll know which cases failed and which passed. And the side effect this time is that you have all the information at your fingertips from the test report, because you’ve got a list of names.

(Note: If you really are worried about DRY (and you shouldn’t be), extract a separate method and call it from all related tests.)
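As a rough sketch of how the separated tests might look with the shared Arrange and Act parts extracted, consider the following. The `Animal` and `AnimalMixer` classes here are hypothetical stand-ins, since their real implementation is not shown, and the behavior of `Mix` is an assumption. The helper `MixDogAndCat` and the second test, `Mix_DogAndCat_DogIsCat`, are also invented for illustration.

```csharp
using System.Collections.Generic;
using Microsoft.VisualStudio.TestTools.UnitTesting;

// Hypothetical stand-in: only the two properties used by the tests.
public class Animal
{
    public bool IsCat { get; set; }
    public bool IsDog { get; set; }
}

// Hypothetical stand-in for the class under test.
public class AnimalMixer
{
    private readonly List<Animal> animals = new List<Animal>();

    public void Add(Animal animal)
    {
        animals.Add(animal);
    }

    // Assumed behavior: after mixing, every animal carries every trait
    // that any added animal had.
    public void Mix()
    {
        bool anyCat = animals.Exists(a => a.IsCat);
        bool anyDog = animals.Exists(a => a.IsDog);
        foreach (Animal animal in animals)
        {
            animal.IsCat = anyCat;
            animal.IsDog = anyDog;
        }
    }
}

[TestClass]
public class AnimalMixerTests
{
    private Animal cat;
    private Animal dog;

    // Shared Arrange and Act, so each test holds only its own assert.
    private void MixDogAndCat()
    {
        cat = new Animal { IsCat = true };
        dog = new Animal { IsDog = true };

        AnimalMixer mixer = new AnimalMixer();
        mixer.Add(cat);
        mixer.Add(dog);
        mixer.Mix();
    }

    [TestMethod]
    public void Mix_DogAndCat_CatIsDog()
    {
        MixDogAndCat();
        Assert.IsTrue(cat.IsDog);
    }

    [TestMethod]
    public void Mix_DogAndCat_DogIsCat()
    {
        MixDogAndCat();
        Assert.IsTrue(dog.IsCat);
    }
}
```

Each test now fails or passes on its own, so the report shows one named result per expectation.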

Is there an escape clause?

When you’re looking at a test that changes several properties of the same object, you might be tempted to put the different Asserts in the same test.
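A tempting test of that kind might look like the sketch below. Again, `Animal` and `AnimalMixer` are hypothetical stand-ins with an assumed `Mix` behavior, and the test name `Mix_DogAndCat_CatIsBoth` is invented for illustration.

```csharp
using System.Collections.Generic;
using Microsoft.VisualStudio.TestTools.UnitTesting;

// Hypothetical stand-in: only the two properties used by the test.
public class Animal
{
    public bool IsCat { get; set; }
    public bool IsDog { get; set; }
}

// Hypothetical stand-in; Mix is assumed to give every animal every trait.
public class AnimalMixer
{
    private readonly List<Animal> animals = new List<Animal>();

    public void Add(Animal animal)
    {
        animals.Add(animal);
    }

    public void Mix()
    {
        bool anyCat = animals.Exists(a => a.IsCat);
        bool anyDog = animals.Exists(a => a.IsDog);
        foreach (Animal animal in animals)
        {
            animal.IsCat = anyCat;
            animal.IsDog = anyDog;
        }
    }
}

[TestClass]
public class SameObjectTests
{
    [TestMethod]
    public void Mix_DogAndCat_CatIsBoth()
    {
        // Arrange
        Animal cat = new Animal { IsCat = true };
        Animal dog = new Animal { IsDog = true };

        // Act
        AnimalMixer mixer = new AnimalMixer();
        mixer.Add(cat);
        mixer.Add(dog);
        mixer.Mix();

        // Assert: both checks inspect the same object, but if the first
        // one throws, the second is never evaluated.
        Assert.IsTrue(cat.IsDog);
        Assert.IsTrue(cat.IsCat);
    }
}
```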

My suggestion: Don’t. The risk of missing information outweighs the appeal of a holistic test. The test name should help here too: If you feel the name is aligned with the asserts, go ahead. But don’t say I didn’t warn you.

But what if my setup takes a lot of time?

That’s a better question. But now we’ve moved from unit test land into integration test land. After you’ve crossed the border, performance does play a role, and it might persuade you to cut corners. Just know what you’re missing.

So, how do I identify the situation?

This one should stand out, like in your face. But if you’re not sure, grab the nearest ear and review the tests. And, if you’re using Isolator, it will tell you if your test is a Check-It-All.